Data Science Weekly - Issue 646

Curated news, articles and jobs related to Data Science, AI, & Machine Learning

Issue #646

April 09, 2026

Hello!

Once a week, we write this email to share the links we thought were worth sharing in the Data Science, ML, AI, Data Visualization, and ML/Data Engineering worlds.

And now…let’s dive into some interesting links from this week.

Editor's Picks

Fast and Gorgeous Erosion Filter

This blog post and the companion video both explain an erosion technique I’ve worked on over the past eight months. The video has lots of elaborate animated visuals and is more focused on my process of discovering, refining, and evolving the technique, while this post has a bit more implementation detail on the final iteration…

AI Assistance Reduces Persistence and Hurts Independent Performance

Through a series of randomized controlled trials on human-AI interactions (N = 1,222), we provide causal evidence for two key consequences of AI assistance: reduced persistence and impairment of unassisted performance. Across a variety of tasks, including mathematical reasoning and reading comprehension, we find that although AI assistance improves performance in the short term, people perform significantly worse without AI and are more likely to give up. Notably, these effects emerge after only brief interactions with AI (approximately 10 minutes). These findings are particularly concerning because persistence is foundational to skill acquisition and is one of the strongest predictors of long-term learning…

Ask HN: Any interesting niche hobbies?

I’m looking for something novel and interesting that isn’t already crowded, something I could meaningfully contribute to…

What’s on your mind

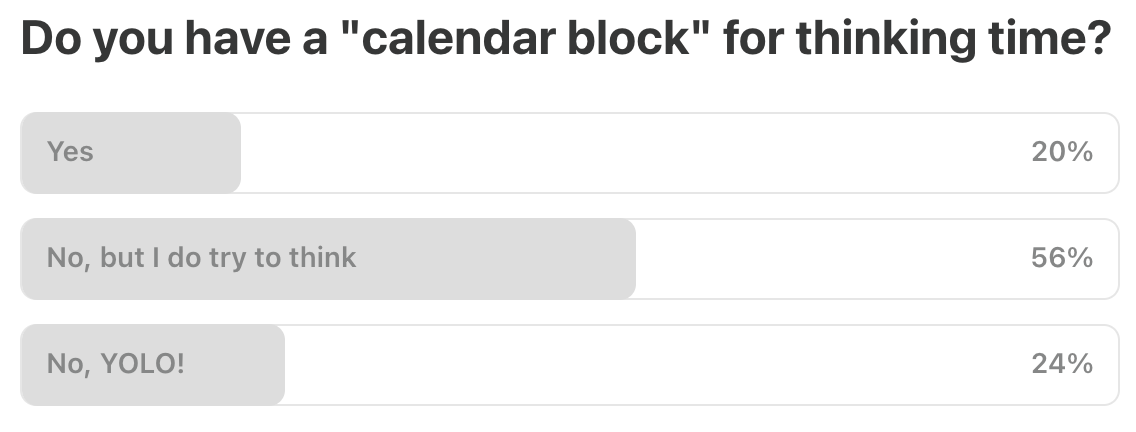

This Week’s Poll:


Last Week’s Poll:


Data Science Articles & Videos

What Category Theory Teaches Us About DataFrames

Every dataframe library ships with hundreds of operations. pandas alone has over 200 methods on a DataFrame…Without a framework for telling them apart, you end up memorizing APIs instead of understanding structure…I ran into this question while building my own dataframe library. I needed to decide which operations were truly fundamental and which were just surface-level variations. That search led me to Petersohn et al.’s Towards Scalable Dataframe Systems…They analyzed 1 million Jupyter notebooks, cataloged how people use pandas, and proposed a dataframe algebra: a formal set of about 15 operators that can express what all 200+ pandas operations do…

I’ve been scraping thousands of U.S. data science jobs for the past couple of months and writing about the findings in my newsletter…At some point, I figured the dashboard was more useful than anything I was writing, so I decided to open-source it…Here’s what it covers:

Top skills companies are actually hiring for, ranked by frequency

Skills broken down by category (ML/DL, GenAI, Cloud, MLOps, etc.)

What % of roles now require AI skills, broken down by seniority level

Salary premium for candidates with AI skills

An interactive explorer where you can browse individual postings with matched skills highlighted

The skill extraction is built on around 230 curated keyword groups, so it’s pretty granular…
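Keyword-group skill extraction is simple enough to sketch. Below is a minimal Python version, assuming each group maps one canonical skill to a few synonyms that are matched case-insensitively on word boundaries. The four groups, the sample postings, and the function names are hypothetical illustrations, not the dashboard’s actual code or its ~230 groups.

```python
import re
from collections import Counter

# Hypothetical keyword groups: canonical skill -> surface forms that count as a match.
KEYWORD_GROUPS = {
    "PyTorch": ["pytorch", "torch"],
    "LLMs": ["llm", "llms", "large language model", "large language models"],
    "MLOps": ["mlops", "ml ops", "model deployment"],
    "SQL": ["sql", "postgres", "postgresql"],
}

# Made-up postings used only to exercise the functions below.
SAMPLE_POSTINGS = [
    "Build LLM pipelines in PyTorch and follow MLOps best practices.",
    "Strong SQL (PostgreSQL) and experience with large language models.",
    "NoSQL experience a plus; Torch or TensorFlow welcome.",
]


def compile_groups(groups):
    """Compile one case-insensitive, word-bounded regex per skill."""
    patterns = {}
    for skill, terms in groups.items():
        # Longest terms first so a multi-word phrase is tried before its prefix.
        ordered = sorted(terms, key=len, reverse=True)
        alternation = "|".join(re.escape(t) for t in ordered)
        # \b keeps "sql" from matching inside a word such as "nosql".
        patterns[skill] = re.compile(rf"\b(?:{alternation})\b", re.IGNORECASE)
    return patterns


def extract_skills(posting, patterns):
    """Return the set of canonical skills mentioned at least once in a posting."""
    return {skill for skill, pattern in patterns.items() if pattern.search(posting)}


def rank_skills(postings, patterns):
    """Rank skills by the number of postings that mention them."""
    counts = Counter()
    for text in postings:
        # A set per posting: each skill counts at most once per posting.
        counts.update(extract_skills(text, patterns))
    return counts.most_common()


if __name__ == "__main__":
    compiled = compile_groups(KEYWORD_GROUPS)
    for skill, n in rank_skills(SAMPLE_POSTINGS, compiled):
        print(f"{skill}: {n}")
```

Counting one hit per posting, rather than one per mention, keeps a single keyword-heavy posting from inflating a skill’s rank, and the word boundaries are what stop “NoSQL” from being counted as SQL.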

Modern SQLite: Features You Didn’t Know It Had

Working with JSON data…Full-text search with FTS5…Analytics with window functions and CTEs…Strict tables and better typing…Generated columns for derived data…Write-ahead logging and concurrency…

Quantization from the ground up

Qwen-3-Coder-Next is an 80-billion-parameter model, 159.4 GB in size. That’s roughly how much RAM you would need to run it, and that’s before thinking about long context windows. This is not considered a big model. Rumor has it that frontier models have over 1 trillion parameters, which would require at least 2 TB of RAM. The last time I saw that much RAM in one machine was never. But what if I told you we can make LLMs 4x smaller and 2x faster, enough to run very capable models on your laptop, all while losing only 5-10% accuracy? That’s the magic of quantization…

The Git Commands I Run Before Reading Any Code

Five git commands that tell you where a codebase hurts before you open a single file. Churn hotspots, bus factor, bug clusters, and crisis patterns…The first thing I usually do when I pick up a new codebase isn’t opening the code. It’s opening a terminal and running a handful of git commands. Before I look at a single file, the commit history gives me a diagnostic picture of the project: who built it, where the problems cluster, whether the team is shipping with confidence or tiptoeing around land mines…

Six (and a half) intuitions for KL divergence

KL divergence is a topic which crops up in a ton of different places in information theory and machine learning, so it’s important to understand well. Unfortunately, it has some properties which seem confusing at a first pass (e.g. it isn’t symmetric like we would expect from most distance measures, and it can be unbounded as we take the limit of probabilities going to zero). I’ve come across lots of different ways to develop good intuitions for it. This post is my attempt to collate all these intuitions and try to identify the underlying commonalities between them…

Semantic Search Without Embeddings

When I need to stay warm, I search for “long johns” or “long underwear”. But modern outdoor clothing stores label this a “base layer”. For a waterproof jacket I search for “slickers” or a “ski jacket”, not realizing what I should search for is a “shell.” Despite my outdated terminology, search still works. I’m somehow understood and shown the right content. We call this semantic search. When you hear that, you might think embeddings. Today I want to stretch your thinking beyond…In semantic search, content and query map to a shared representation. This space has a similarity function that scores similar items higher than less similar. Let’s walk through an example…

Agentic Data Science Done Right: Why AI Coding Agents Make Bad Analytical Decisions - And How to Fix It

AI coding agents write correct code. They make unreliable analytical decisions. That gap between syntactically valid code and statistically sound conclusions is where costly mistakes are made. Decision Lab is an open-source framework that closes this gap by encoding domain expertise, multi-path exploration, and Bayesian uncertainty quantification into every agent run…

Eight years of wanting, three months of building with AI

For eight years, I’ve wanted a high-quality set of devtools for working with SQLite. Given how important SQLite is to the industry, I’ve long been puzzled that no one has invested in building a really good developer experience for it…A couple of weeks ago, after ~250 hours of effort over three months on evenings, weekends, and vacation days, I finally released syntaqlite (GitHub), fulfilling this long-held wish. And I believe the main reason this happened was because of AI coding agents…there’s no shortage of posts claiming that AI one-shot their project or pushing back and declaring that AI is all slop. I’m going to take a very different approach and, instead, systematically break down my experience building syntaqlite with AI, both where it helped and where it was detrimental. I’ll do this while contextualizing the project and my background so you can independently assess how generalizable this experience was. And whenever I make a claim, I’ll try to back it up with evidence from my project journal, coding transcripts, or commit history…

Land development math is alchemy: Why cheap land is cheap & how to turn it into gold

Friends often send me links to beautiful parcels of land with prices that are too good to be true…When we look into the underlying development potential, it quickly explains the price: you’re not allowed to build much on it. The land isn’t “entitled.” Entitlement is the legal process by which raw land gets permission to be developed; in California, it’s long, expensive, uncertain, and controlled by local governments. Cheap land is typically cheap because the market has priced in how risky it is to get that permission. The discount isn’t a bargain; it reflects the work (and luck) required to unlock the value. But if you do succeed in entitling land for significant development, its value shoots up to reflect the new permitted supply (assuming the underlying market is strong). I call this “entitlement alchemy”. The dirt doesn’t move, but the piece of paper saying “you may build here” can double the value overnight. In supply-constrained markets, permission to build is a huge portion of the land’s value…

R is flexible about classes. Variables are not declared with explicit classes, and arguments of the “wrong” class don’t cause errors until they explicitly fail at some point in the call stack. It would be helpful to keep that flexibility from a user standpoint, but to error informatively and quickly if the inputs will not work for a computation. The purpose of stbl is to allow programmers to specify what they want, and to then see if what the user supplied can work for that purpose.

This approach aligns with Postel’s Law:

“Be conservative in what you send. Be liberal in what you accept from others.”

stbl is liberal about what it accepts (by coercing when safe) and conservative about what it returns (by ensuring that inputs have the classes and other features that are expected)…
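stbl is an R package, but its “coerce when safe, fail fast and informatively” idea carries over to other languages. Here is a minimal Python analogue for a single integer argument. `to_int_safe` and `take_first` are hypothetical helpers written for illustration, not part of stbl’s API, and the rules for what counts as “safe” (whole-number floats and integer strings yes, fractional floats and booleans no) are our assumptions.

```python
def to_int_safe(value, arg="x"):
    """Return `value` as an int if that loses no information; otherwise raise
    an error that names the offending argument (hypothetical helper)."""
    # bool is a subclass of int in Python; reject it as a probable mistake.
    if isinstance(value, bool):
        raise TypeError(f"`{arg}` must be an integer, not a bool.")
    # Already an int: accept as-is.
    if isinstance(value, int):
        return value
    # Floats are accepted only when they hold a whole number (4.0 -> 4).
    # NaN and infinity fail is_integer(), so they are rejected here too.
    if isinstance(value, float):
        if value.is_integer():
            return int(value)
        raise ValueError(
            f"`{arg}` = {value!r} cannot become an int without losing information."
        )
    # Strings are accepted when they parse as a base-10 integer (" 7 " -> 7).
    if isinstance(value, str):
        try:
            return int(value.strip())
        except ValueError:
            raise ValueError(f"`{arg}` = {value!r} is not an integer string.") from None
    # Anything else (lists, dicts, None, ...) is refused outright.
    raise TypeError(f"`{arg}` must be int-like, got {type(value).__name__}.")


def take_first(values, n):
    """Example caller: validate `n` up front, before doing any work."""
    n = to_int_safe(n, "n")
    return list(values)[:n]
```

Validating at the top of the caller surfaces a bad argument immediately, with its name in the message, instead of letting it fail somewhere deeper in the call stack.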

Those of you with 10+ years in ML — what is the public completely wrong about? [Reddit]

For those of you who’ve been in ML/AI research or applied ML for 10+ years: what’s the gap between what the public thinks AI is doing vs. what’s actually happening at the frontier? What are we collectively underestimating or overestimating?…

Cogsci Book Summaries

A reading list of 79 books about cognitive science and its application to the classroom…For each book there is a pdf of my summary…


Last Week's Newsletter's 3 Most Clicked Links


* Based on unique clicks.

** Please take a look at last week's issue #645 here.

Cutting Room Floor


Whenever you're ready, 3 ways we can help:

Go deeper each week (paid subscription)

Get 3 additional posts per week designed to help you:

Statistics → understand the math behind ML

AI Agents → build with modern AI tools

Career → become more valuable at your job

Looking to get a job?

A practical guide to landing your first (or next) data science role, based on thousands of reader questions.

👉 Check out our “Get A Data Science Job” Course

Promote your organization/project/event to ~68,000 subscribers

Sponsor this newsletter and reach a highly engaged data science audience (30–35% open rate).

👉 Reply to this email to learn more

Thank you for joining us this week! :)

Stay Data Science-y!

All our best,

Hannah & Sebastian